The Three-Armed Medic

The three-armed medic is an autonomous robotic assistant for paramedics and EMTs, who could often use an extra hand for tasks such as passing tools. This project combines state-of-the-art computer vision with robot motion planning. The robot responds to verbal commands by locating the requested medical tool in the scene, picking it up, and placing it in the user’s hand. The system runs on ROS2 and uses a mixture of learned, analytically derived, and algorithmic approaches for task and motion planning.

We presented a workshop paper on this project at The Evolving Landscape of Surgical Robotics workshop at ICRA 2025.

Autonomous Task Reasoning

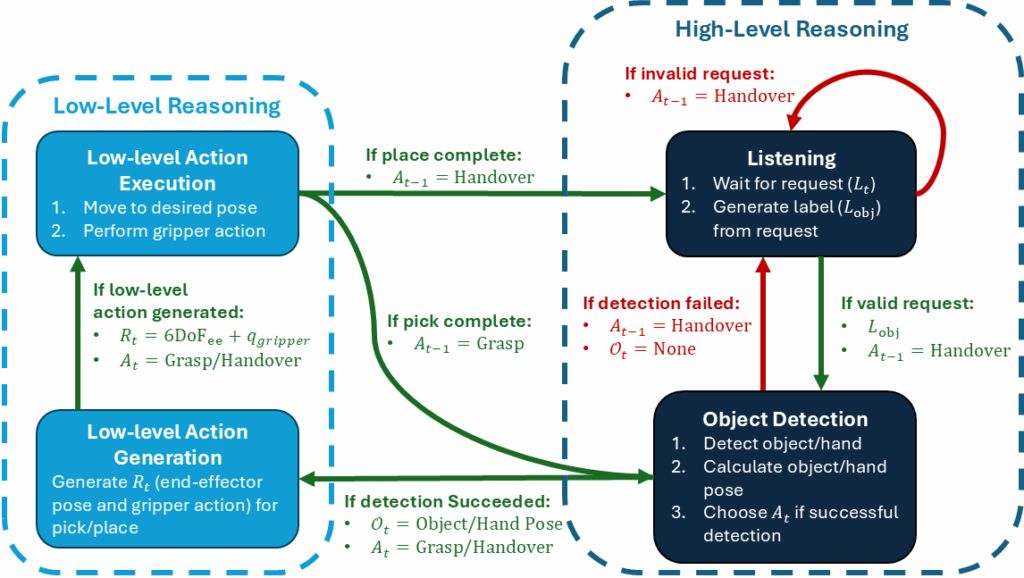

The robot operates in one of several states, or modes. A state machine defines the rules for how the robot transitions between modes and which skills it performs to change the world state and move to a new mode.
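As a rough illustration of this idea, the sketch below models a table-driven state machine in plain Python. The mode names, event names, and skill names are all hypothetical placeholders chosen for the example; they are not taken from the project's actual implementation, which may be structured quite differently.

```python
from enum import Enum, auto


class Mode(Enum):
    # Hypothetical modes, chosen only for illustration.
    IDLE = auto()
    LOCATING = auto()
    PICKING = auto()
    HANDING_OVER = auto()


# (current mode, event) -> (skill to run, next mode)
# Every event and skill name here is an assumed placeholder.
TRANSITIONS = {
    (Mode.IDLE, "command_received"): ("locate_tool", Mode.LOCATING),
    (Mode.LOCATING, "tool_found"): ("pick_tool", Mode.PICKING),
    (Mode.LOCATING, "tool_not_found"): ("report_failure", Mode.IDLE),
    (Mode.PICKING, "grasp_succeeded"): ("move_to_hand", Mode.HANDING_OVER),
    (Mode.PICKING, "grasp_failed"): ("locate_tool", Mode.LOCATING),
    (Mode.HANDING_OVER, "tool_released"): ("return_home", Mode.IDLE),
}


def step(mode, event):
    """Return (skill, next_mode); events with no rule leave the mode unchanged."""
    return TRANSITIONS.get((mode, event), (None, mode))


def run(events, mode=Mode.IDLE):
    """Feed events through the machine and collect the skills that were triggered."""
    skills = []
    for event in events:
        skill, mode = step(mode, event)
        if skill is not None:
            skills.append(skill)
    return mode, skills
```

Keeping the rules in a single table makes it easy to see which events are legal in each mode, and an event that has no rule in the current mode is simply ignored rather than causing an unexpected transition.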

Object Detection

Object detection and pose estimation were done using a variety of state-of-the-art computer vision models. Hands were detected using Google’s MediaPipe model, and hand pose was calculated from the landmarks on the palm of the hand. Medical objects were detected and masked using a fine-tuned YOLOv9 model. Object poses were found by aligning the masked point clouds with meshes of the objects using an iterative closest point (ICP) algorithm.
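One plausible way to turn palm landmarks into a pose is to build an orthonormal frame from three of them. The sketch below assumes the landmarks have already been converted to metric 3D points (for example, using the depth image) and uses the MediaPipe hand landmark indices for the wrist (0), index finger MCP (5), and pinky MCP (17). The choice of these three landmarks and the axis conventions are assumptions made for illustration, not necessarily what the project used.

```python
import math

# MediaPipe hand landmark indices used in this sketch.
WRIST, INDEX_MCP, PINKY_MCP = 0, 5, 17


def _sub(a, b):
    return tuple(ai - bi for ai, bi in zip(a, b))


def _cross(a, b):
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def _unit(a):
    norm = math.sqrt(sum(c * c for c in a))
    if norm < 1e-9:
        raise ValueError("degenerate landmarks: cannot normalize a zero vector")
    return tuple(c / norm for c in a)


def palm_frame(landmarks):
    """Estimate a palm frame from 21 (x, y, z) hand landmarks in metres.

    Returns (origin, (x_axis, y_axis, z_axis)):
      origin is the centroid of the wrist, index MCP, and pinky MCP;
      x_axis points from the wrist toward the index MCP;
      z_axis is normal to the plane through the three landmarks;
      y_axis completes a right-handed frame.
    """
    wrist = landmarks[WRIST]
    index_mcp = landmarks[INDEX_MCP]
    pinky_mcp = landmarks[PINKY_MCP]

    origin = tuple(sum(c) / 3.0 for c in zip(wrist, index_mcp, pinky_mcp))
    x_axis = _unit(_sub(index_mcp, wrist))
    z_axis = _unit(_cross(x_axis, _sub(pinky_mcp, wrist)))
    y_axis = _cross(z_axis, x_axis)
    return origin, (x_axis, y_axis, z_axis)
```

Note that the direction of the normal flips between left and right hands with this construction, so a real system would need to use the detected handedness to decide which side of the palm faces up.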

Motion Planning

The robot senses the environment using a fixed RGB-D camera, which is used to locate both objects and obstacles in the scene. The MoveIt motion planner generates collision-free trajectories to pick up and hand over the requested tool.
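Because the camera is fixed, anything it detects has to be expressed in the robot's base frame before a planner can use it. The sketch below shows the standard pinhole deprojection of a depth pixel followed by a fixed camera-to-base transform. The intrinsics, the extrinsic matrix, and the function names are illustrative assumptions; in a ROS2 system this step would more likely be handled through the transform tree than by hand.

```python
def deproject(u, v, depth_m, fx, fy, cx, cy):
    """Convert pixel (u, v) with depth in metres to a 3D point in the camera frame.

    Uses the pinhole model with focal lengths (fx, fy) and principal point (cx, cy).
    """
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)


def transform_point(T, p):
    """Apply a 4x4 homogeneous transform T (nested lists, row-major) to point p."""
    x, y, z = p
    return tuple(
        T[r][0] * x + T[r][1] * y + T[r][2] * z + T[r][3] for r in range(3)
    )


# Hypothetical calibration values, for illustration only.
FX, FY, CX, CY = 500.0, 500.0, 320.0, 240.0
T_BASE_CAMERA = [
    [1.0, 0.0, 0.0, 0.5],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.2],
    [0.0, 0.0, 0.0, 1.0],
]

point_camera = deproject(420, 240, 2.0, FX, FY, CX, CY)
point_base = transform_point(T_BASE_CAMERA, point_camera)
```

With a fixed camera, the extrinsic transform only needs to be calibrated once, which is one practical advantage over a wrist-mounted camera whose pose changes with every arm motion.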